Flare daemon

The Flare daemon is a long-running PHP process that accepts errors, traces, and logs from your application over a local HTTP connection and forwards them to Flare asynchronously. With the daemon running, Flare delivery is no longer part of the critical path of your request, which keeps response times stable even when the application produces a lot of telemetry.

Why use the daemon

By default, the Laravel client posts each report straight to Flare's ingress over HTTP. With the daemon, your application posts to a long-running process on the same host instead. The daemon buffers payloads and forwards them to Flare on its own schedule, so the application is free as soon as the local hop completes. If the daemon is unreachable for any reason, the client falls back to direct HTTP delivery automatically, so a daemon outage degrades performance but never drops telemetry.

Where the daemon should run

The daemon should sit as close to your application as possible. Ideally that means running it on the same host (server, instance, box, droplet, VM) so the client can post to localhost, which keeps the hop tiny and avoids any extra network legs between the application and the daemon.

If you run a single VPS, one long-lived daemon listening on 127.0.0.1:8787 is all you need. If your fleet is spread across multiple machines, run one daemon per machine.

Switching the sender

Whichever way you run the daemon, the application side is the same: swap the sender in your config/flare.php:

'sender' => [
    'class' => \Spatie\FlareClient\Senders\DaemonSender::class,
    'config' => [
        'daemon_url' => env('FLARE_DAEMON_URL', 'http://127.0.0.1:8787'),
    ],
],

The Laravel client will now post to the daemon at the end of each request, and the daemon takes care of the upstream delivery on its own.

Installing the daemon

The daemon binary ships with the Flare client. After installing spatie/laravel-flare, the binary is already available at vendor/bin/flare-daemon:

./vendor/bin/flare-daemon --version

Below are the recommended ways to run it, by hosting environment.

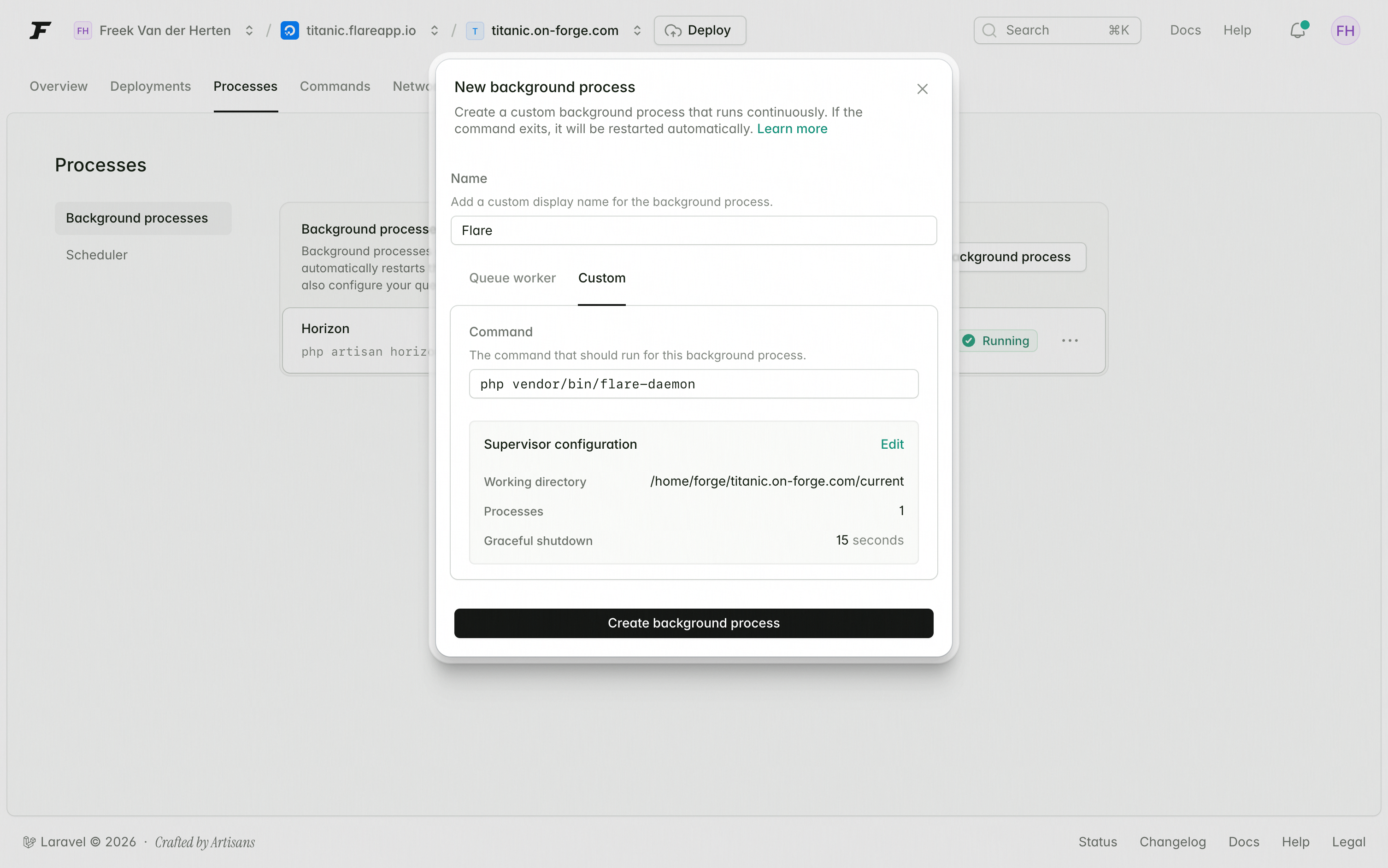

Laravel Forge

Forge can run the daemon as a managed background process. On your server's Daemons tab, click New Daemon and configure it with the command below and the user that owns your site.

- Command: php vendor/bin/flare-daemon
- User: forge
- Directory: your site's release path (e.g. /home/forge/your-site/current)
- Processes: 1

Forge wraps this in a Supervisor configuration that restarts the daemon on failure and on every deploy.

Kubernetes

The Helm chart runs the daemon as a DaemonSet so each Kubernetes node has its own local instance. Install the chart from the OCI registry:

helm install flare-daemon \
  oci://ghcr.io/spatie/charts/flare-daemon \
  --namespace flare-daemon \
  --create-namespace

Helm pulls the latest published chart version when --version is omitted.

Application pods can then send daemon traffic to:

http://flare-daemon.flare-daemon.svc.cluster.local:8787

The chart defaults to service.internalTrafficPolicy=Local, so application pods always hit the daemon on their own node. If a node-local daemon is unavailable, the Flare client falls back to direct delivery.

In your application's environment, set FLARE_DAEMON_URL to that in-cluster URL so the sender resolves to the right Service.
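The in-cluster URL above follows the usual Kubernetes Service DNS pattern, <service>.<namespace>.svc.cluster.local. As a small sketch, the hypothetical helper below (not part of Flare or the Helm chart) rebuilds that URL, which may help if you install the chart into a different namespace. It assumes the Service is named flare-daemon, the cluster uses the default cluster.local domain, and the daemon port is 8787.

```shell
# Hypothetical helper: build the daemon URL from Service name, namespace, and port.
# The defaults mirror the names used in the helm install command above.
flare_daemon_cluster_url() {
    service=${1:-flare-daemon}
    namespace=${2:-flare-daemon}
    port=${3:-8787}
    printf 'http://%s.%s.svc.cluster.local:%s\n' "$service" "$namespace" "$port"
}

# Example (not executed here): export the URL for the application environment.
#   export FLARE_DAEMON_URL="$(flare_daemon_cluster_url)"
```

Called without arguments, the helper prints the same URL shown above. If your release uses a different Service name or namespace, pass them as the first and second argument.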

Docker

A container image is published to GitHub Container Registry as ghcr.io/spatie/flare-daemon. The simplest standalone invocation:

docker run -d --name flare-daemon -p 8787:8787 ghcr.io/spatie/flare-daemon

If your application is also containerised, run the daemon as a sibling service in your docker-compose.yml and point FLARE_DAEMON_URL at it:

services:
  app:
    # your existing application service
    environment:
      FLARE_DAEMON_URL: http://flare-daemon:8787
  flare-daemon:
    image: ghcr.io/spatie/flare-daemon
    restart: unless-stopped

Keep both services on the same Compose network (and ideally the same host) so the hop stays local.

Generic VPS / self-hosted

On any other Linux host, run the daemon as a long-lived process supervised by Supervisor, systemd, or any other process manager:

./vendor/bin/flare-daemon

A minimal Supervisor program looks like this:

[program:flare-daemon]
command=/path/to/your/site/vendor/bin/flare-daemon
autostart=true
autorestart=true
user=www-data

By default the daemon listens on 127.0.0.1:8787 and forwards to https://ingress.flareapp.io. If you prefer a single-file deployment, the same release ships a PHAR you can run directly:

php daemon.phar
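Only a Supervisor program is shown above. For systemd, an equivalent unit might look like the output of the sketch below. The helper name, the unit description, and the choice of Restart=always (mirroring autorestart=true) are assumptions, and the site path is a placeholder just as in the Supervisor example.

```shell
# Hypothetical helper: print a minimal systemd unit for the daemon to stdout.
#   $1 = absolute path to the site (placeholder), $2 = user to run as (default: www-data)
flare_daemon_unit() {
    site_dir=$1
    run_user=${2:-www-data}
    cat <<EOF
[Unit]
Description=Flare daemon
After=network.target

[Service]
User=$run_user
WorkingDirectory=$site_dir
ExecStart=$site_dir/vendor/bin/flare-daemon
Restart=always

[Install]
WantedBy=multi-user.target
EOF
}

# Example (not executed here): install and start the unit.
#   flare_daemon_unit /path/to/your/site | sudo tee /etc/systemd/system/flare-daemon.service
#   sudo systemctl daemon-reload
#   sudo systemctl enable --now flare-daemon
```

systemd requires an absolute path in ExecStart, so pass the site directory as an absolute path.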

Verbose mode

By default the daemon logs lifecycle events (started, stopped) and a periodic summary of forwarded payloads. Pass --verbose (or -v) to also log every individual payload at DEBUG level:

./vendor/bin/flare-daemon --verbose

docker run -d --name flare-daemon -p 8787:8787 ghcr.io/spatie/flare-daemon --verbose

Configuration

The daemon itself is configured through environment variables on the process running it (not in config/flare.php):

| Variable | Default | Description |
|---|---|---|
| FLARE_DAEMON_LISTEN | 127.0.0.1:8787 | Address the daemon listens on |
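As an illustration of how the daemon-side variable and the client-side URL relate, the sketch below moves the daemon to a different port and derives FLARE_DAEMON_URL from it. Port 9000 is an arbitrary example, and the sketch assumes the daemon and the application read their environment from the same shell, which is rarely the case in production; there you would set each variable where the respective process is configured.

```shell
# Daemon side: listen on a non-default port (9000 is an arbitrary example).
export FLARE_DAEMON_LISTEN=127.0.0.1:9000

# Application side: the client URL must point at the same address.
export FLARE_DAEMON_URL="http://$FLARE_DAEMON_LISTEN"

# Then start the daemon in this environment (not executed here):
#   ./vendor/bin/flare-daemon
```

If the two values drift apart, the client cannot reach the daemon and falls back to direct delivery.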

For the full reference, see the daemon README.

Verifying it works

Start the daemon in verbose mode in one shell:

./vendor/bin/flare-daemon --verbose

In another shell, send a test payload:

php artisan flare:test

You should see the payload accepted by the daemon and forwarded to Flare's ingress.
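If nothing shows up in the daemon's output, it can help to first confirm that something is listening at the configured address. The following is a minimal sketch, assuming a POSIX shell and an nc (netcat) binary that supports -z and -w. Both helper names are hypothetical and not part of Flare.

```shell
# Split a daemon URL such as http://127.0.0.1:8787 into "host port".
# Falls back to FLARE_DAEMON_URL, then to the documented default address.
daemon_host_port() {
    url=${1:-${FLARE_DAEMON_URL:-http://127.0.0.1:8787}}
    hostport=${url#*://}       # strip the scheme
    hostport=${hostport%%/*}   # strip any path
    host=${hostport%%:*}
    port=${hostport##*:}
    if [ "$port" = "$hostport" ]; then
        # No explicit port in the URL: use the scheme's default.
        case $url in
            https://*) port=443 ;;
            *) port=80 ;;
        esac
    fi
    printf '%s %s\n' "$host" "$port"
}

# Return 0 if a TCP connection to the daemon address succeeds within 2 seconds.
# Assumes an nc binary with -z (scan only) and -w (timeout) support.
daemon_reachable() {
    # Unquoted on purpose: split "host port" into $1 and $2.
    set -- $(daemon_host_port "${1:-}")
    nc -z -w 2 "$1" "$2"
}

# Example (not executed here):
#   daemon_reachable && echo "daemon port is open" || echo "nothing listening"
```

A successful check only shows that the port accepts connections. The verbose daemon output remains the way to confirm that payloads are accepted and forwarded.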

Fallback behavior

If the daemon is unreachable when the client tries to send (process down, wrong URL, network issue), the DaemonSender automatically falls back to direct HTTP delivery to Flare's ingress and emits a warning to STDERR. This means a daemon outage just brings you back to the default per-request shipping behavior. Telemetry is never lost because of the daemon.
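The control flow described above can be sketched in a few lines of shell. This illustrates the pattern only and is not the DaemonSender's actual (PHP) implementation: send_with_fallback and the two commands passed to it are hypothetical stand-ins for "send to the daemon" and "send directly to Flare".

```shell
# Try the primary sender first; if it fails, warn on STDERR and use the fallback.
#   $1 = command that delivers via the daemon (hypothetical)
#   $2 = command that delivers directly to Flare (hypothetical)
send_with_fallback() {
    primary=$1
    fallback=$2
    if "$primary"; then
        return 0
    fi
    echo "warning: daemon unreachable, falling back to direct delivery" >&2
    "$fallback"
}

# Example (not executed here), with two placeholder commands:
#   send_with_fallback send_via_daemon send_direct
```

The payload is lost only if both paths fail, which matches the behavior without a daemon.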